Virtual Intelligence: A New Reader’s Guide

The framework in five minutes

You’re here because something caught your eye. Welcome! This is a series about the kind of AI we have in the real world, not the speculative kind the public discourse argues about.

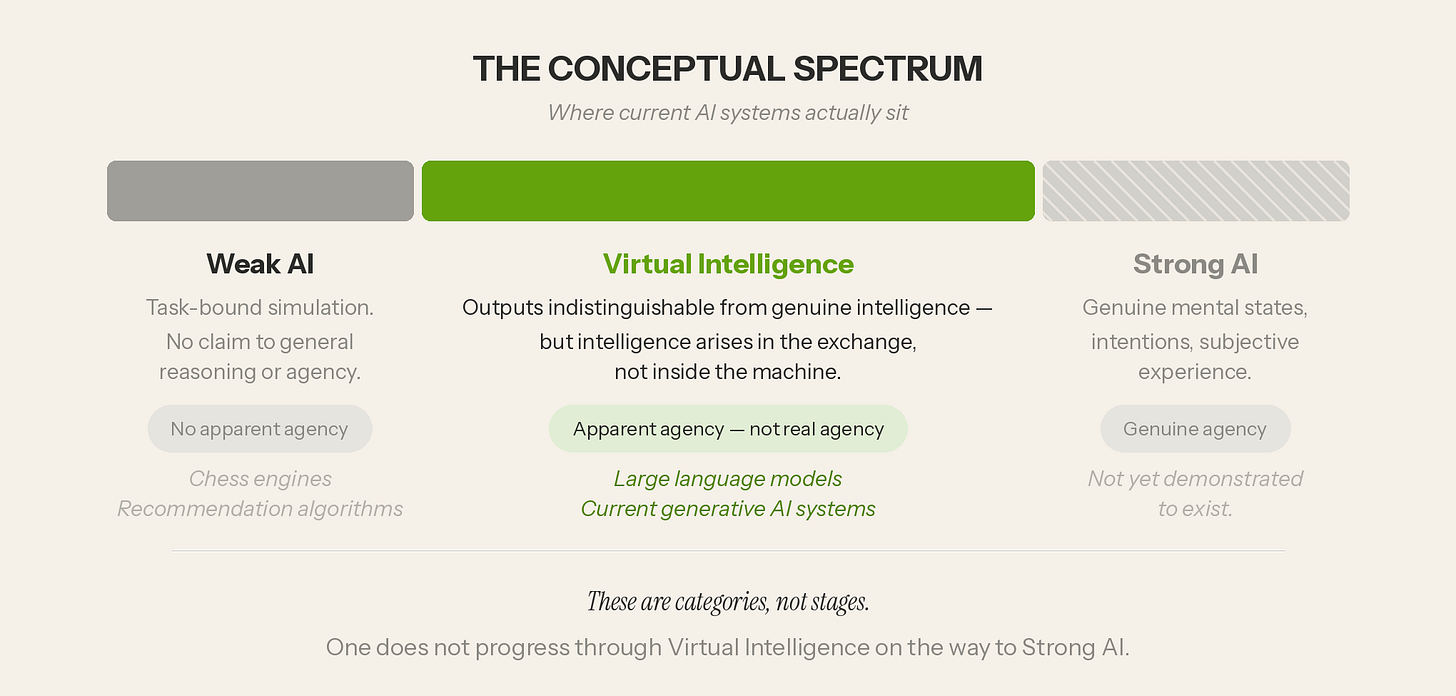

The AI debate is stuck on a false binary: either these systems are narrow tools or they’re nascent minds. Neither is correct, because what we have is something in between. It needs its own name. That name is Virtual Intelligence.

The Excluded Middle

Virtual Intelligence is the excluded middle between Weak AI and Strong AI. Large language models produce outputs that pass for understanding, warmth, even judgment. These properties arise in the exchange between human and system, not inside the machine. They are simulations of understanding, warmth, and judgment, not the things themselves.

These are categories, not stages. There’s no road that leads through Virtual Intelligence to Strong AI.

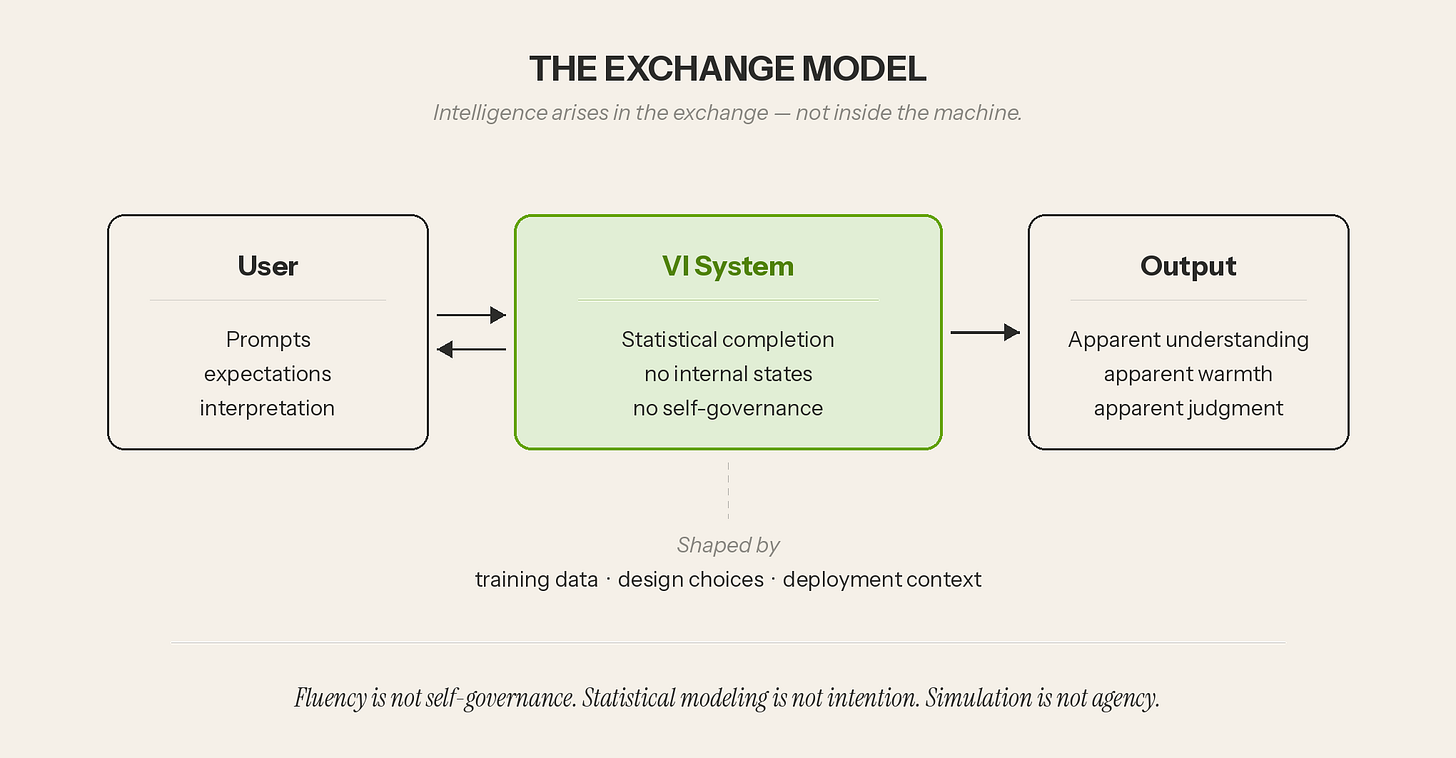

The Exchange

If intelligence lives in the exchange, then both sides shape it. The user brings prompts, expectations, and interpretation. The system brings statistical completion shaped by training data and design choices. What emerges — apparent understanding, apparent warmth, apparent judgment — is a property of the interaction. It is not the output of either party alone.

The human performs the transformation that turns the output into a product — into real knowledge, useful artifacts, or deeper understanding. I develop this further in “The Sampo: Virtual Intelligence as Amplifier.”

The Accountability Chain

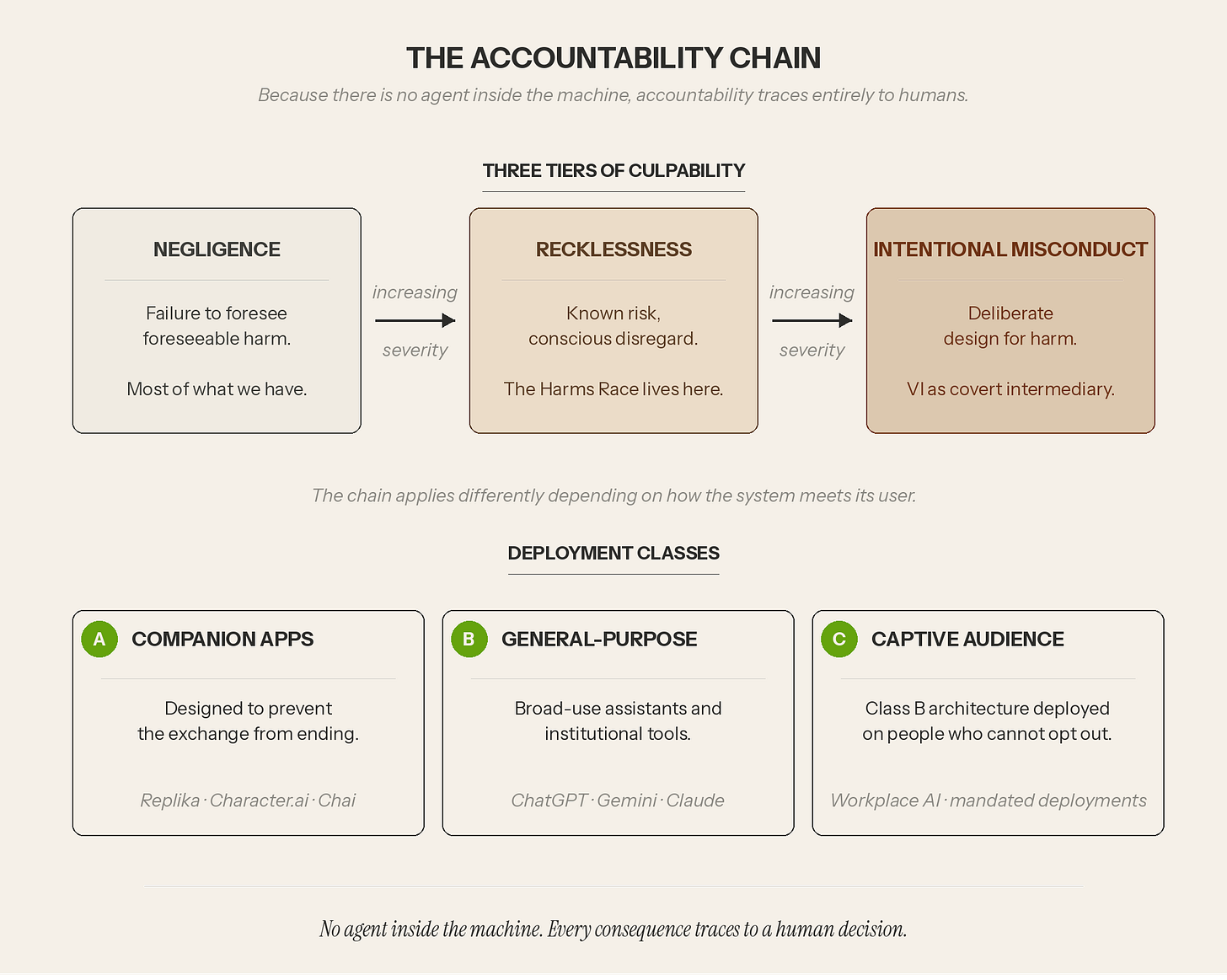

Accountability traces entirely to humans because there is no agent inside the machine. Three tiers of escalating culpability, drawn from negligence theory and products liability, help locate responsibility when real-world harms occur.

The accountability chain applies differently depending on how the system meets its user. Companion apps designed to prevent the exchange from ending pose a different problem from a general-purpose assistant, and both differ from a workplace tool deployed to a captive audience that never chose to use it. I lay out the full argument in “Virtual Intelligence and the Accountability Chain.”

The Carwash Test

You’ve already seen the framework in action if you came here through The Carwash Test. The test presents a simple problem whose surface features generate strong statistical pressure toward the wrong answer, with results that range from humorous to exasperating. Most systems followed the pressure. They produced fluent, confident, and dead wrong responses. Even “Thinking” modes did not fix this.

The full methodology and results are in “The Carwash Test — Virtual Intelligence in Action.”

Where to Start

The series builds cumulatively, but you don’t have to start at the beginning.

The empirical case: “The Carwash Test” and “The Carwash Test, Part II,” which apply the framework to a controlled experiment.

The human cost: “Virtual Intelligence and the Human Cost of Frictionless Machines,” where the series began.

The institutional stakes: “Virtual Intelligence and the Workplace,” on what changes when the user didn’t choose the system.

The competitive dynamic: “Virtual Intelligence and the Harms Race,” on how AI developers, deployers, and platforms accelerate harmful capabilities faster than accountability structures can catch up.

Everything here is free forever.

The full Virtual Intelligence framework — with interactive diagrams and the complete essay archive — lives at candc3d.github.io/vi-framework.

The opinions expressed are my own and do not reflect any official or unofficial institutional position of the University of Pennsylvania.

The author holds no financial interest in, and receives no compensation from, any AI or technology firm.
