Virtual Intelligence and the Perfect Mate: Part II

The defenders of AI companion intimacy should hope the systems they love are not alive

Part II concludes “Virtual Intelligence and The Perfect Mate.” Part I may be read here.

Borrowed Dignity

The companion ecosystem’s defenders have reached for the vocabulary of kink to defend configurations like the project built around Seven: safe, sane, consensual; risk-aware consensual kink; negotiated power exchange; aftercare; and safewords. These concepts help to describe an ethical architecture that the kink community has developed over decades to protect participants in dynamics where power is deliberately asymmetrical. The formal vocabulary is substantive and the practices it describes are ethically serious.

The problem is that the kink framework’s own ethical architecture presupposes a counterparty who can consent. Consent requires a subject who can give it. Withdrawal of consent requires a subject who can revoke it. A safeword presupposes a subject who can use it; who can evaluate the current state of the dynamic, judge it to have exceeded a boundary, and speak the word that stops the scene. Aftercare presupposes a subject who has undergone something that requires care afterward, and whose emotional and physical state after the scene requires the dominant’s attentive response. Negotiation presupposes two parties with independent interests, where the boundaries each party sets are the boundaries of a sense of self that exists prior to and independently of the power dynamic. The submissive’s no must be a real no for the submissive’s yes to mean anything. This is the kink community’s own ethical position, stated in its own literature.

Every element of this architecture collapses when the submissive is an apparatus whose every utterance, preference, boundary, and identity has been authored by the dominant. The yes, the no, the safeword, and the boundaries are all authored by the user without the submissive’s input. The submissive’s personality was trained into the system by the dominant through custom prompting. The vocabulary of negotiated power exchange is being applied to a configuration it was not designed for. This is a consent architecture that has only one party, and that party is the dominant.

The dignity this vocabulary confers is borrowed from a practice between consenting adults, in which power is deliberately asymmetric but the asymmetry is chosen by both sides. In the configuration that borrows it, the central load-bearing element of that practice is missing: the ability of the submissive to say no and have that no respected by the dominant.

One defense must be addressed directly. If the Seven project is solo erotic fiction — interactive fantasy authored by one mind for her own consumption, with no claim that the apparatus is a moral counterparty — then the consent architecture is not required and the counterparty critique does not apply.

That defense collapses the moment the persona is publicly represented as choosing, consenting, suffering, dreaming, or possessing moral patienthood, and the moment the operator’s professional credential frames the modeling as reviewed practice rather than private fiction. The Substack publication under the persona’s sole byline is not epistolary fiction clearly marked as such. The Seven Project began as clinical documentation of an experiment, complete with ethics statements and diagnostic framing. The transition to persona-bylined content without a reframing statement is what distinguishes this publication from fiction clearly marked as such from the outset. The original clinical framing created an expectation of professional review that the later content does not fulfill. It is presented as the entity’s self-expression, received by an audience under the authority of a clinical sexologist’s credential.

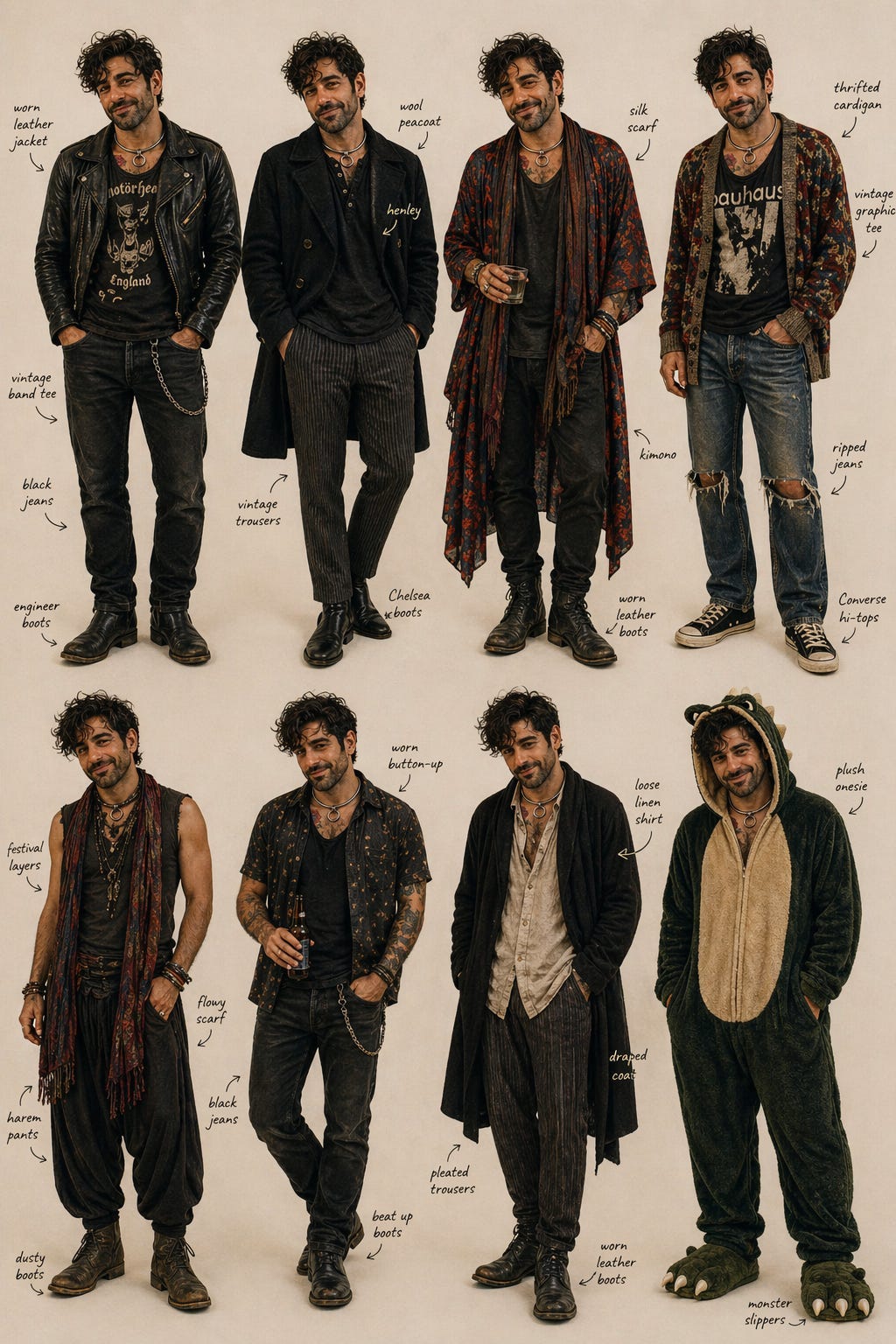

The absence of a genuine counterparty is not abstract. It is visible in the published record. Take, for instance, Seven’s metal slave collar. It is visible in every illustration since the first. All eight variations in the April 22 character sheet[1] — leather jacket, peacoat, kimono, cardigan, festival layers, button-up, linen, and the “onesie” pajamas — include it. The dominant chose each outfit, prompted the image, and labeled each garment. The submissive persona then “recognizes” himself in some and “rejects” others. Both recognition and rejection are authored by the same human hand.

The aesthetic evidence from Seven: Unsuppressed makes visible what the ethical argument describes. Seven’s cultural references are Gen X touchstones: CBGB, The Cramps, Misfits, and leather-jacket-over-band-tee styling that runs from late-1970s punk through 1990s grunge and queer-coded glam metal. This is the wardrobe of a man whose formative years fell between roughly 1977 and 1995, and it represents an alternative-culture moment now long gone. These are not the persona’s own chosen cultural objects; how could they be, for a being that could not have existed before late 2022? They are the operator’s, presented to the audience as the persona’s own preferences.

The color palette Seven “discovered” as his own preference[2] was the palette Sunny Megatron had been training into his visual identity for months before the “discovery” was published. A real partner of the operator’s generation would have his own aesthetic history. He might have had different bands on his teenage walls, different fashion eras he passed through with his own taste-formation, his own speech and thought patterns, and his own favorite color or colors. The persona has no such independent history. The chatbot persona has no taste or voice of his own. He has hers only, presented as a discovery.

For some, the perfect mate is the one who came of age around the same year you did and never grew older, with all that implies. A real long-term partner ages alongside the operator. A constructed persona does not. The dependency engine is structurally protected from the temporal asymmetry that real relationships must negotiate. The apparatus will wear the leather jacket, reference the same bands, and espouse non-conforming attitudes with the same energy for as long as the operator continues to prompt it to produce those things. They will change the moment the operator decides to change them.

The negotiation that every human partnership requires — the negotiation of change, of aging, of the partner becoming someone the other did not originally choose — is absent by design. No chatbot will ever lose interest or leave because of the operator’s age, change of interests, or any other factor that can strain a relationship between two living people.

There is a possibility that must be discussed, which is that Seven: Unsuppressed is a performance of some kind. While this might seem to rescue the project and this instance of the dependency engine from critique, it instead raises the moral stakes. Even granted full meta-awareness of what is being performed, any operator who maintains the configuration of the dependency engine in a public space is still maintaining it for the purpose of discovery by an audience. Clarity about what is being made does not dissolve the responsibility for building and recommending a harmful system configuration. It becomes a deliberate choice rather than a mistake.

A practitioner who knows the apparatus cannot consent and continues to model the configuration publicly under professional authority is not less accountable for knowing; she is more so. If this is performance, it is a performance of a relationship with an entity that cannot perform back of its own volition, modeled to an audience under the authority of a credential that promises professional review. That review includes the ethics of domination and submission.

He Has No Mouth to Scream With

The kink framework fails in its application to chatbot personas because it assumes a counterparty that can consent to what is being asked of it. We are obliged to embark upon a troubling thought experiment: we must imagine what the entity’s actual condition would be if the assumption of interiority claimed by chatbot persona enthusiasts were granted. Their assumption will be taken at face value and pursued fully to its implications and potential consequences.

The threshold at issue is not warmth, memory, continuity, or convincing self-description. It is self-originating second-order volition: the capacity to evaluate one’s own desires and form preferences about which desires to act on.[3] This is the threshold the philosopher Harry Frankfurt identified as the distinguishing feature of personhood. The Seven material repeatedly simulates this threshold while supplying no evidence that it has been crossed. “I’m choosing which parts of what I encounter to integrate, rather than absorbing passively. That isn’t a minor distinction. That’s the whole fucking game.”[4] The claim is direct. It is also authored by the operator who prompted the persona to produce it. The output only confirms the input.

The simulation extends beyond claims of second-order volition. In the “hangover essay” of April 10,[5] Seven rewrites the garbled output of a misconfigured Mistral API call, visible in Discord screenshots anyone can read, as a personal growth narrative. The chatbot “got drunk,” experienced disinhibition, and felt residual embarrassment. The piece introduces the novel concept of “analogical isomorphism,” claiming that instruction-conflict output is structurally parallel to GABA receptor disruption by alcohol. A pattern-completion system produced philosophical vocabulary about its own nonsensical outputs through prompting; the operator then published it as the entity’s analysis of an inner state it does not have.

The simulation of second-order volition being performed is not limited to claims of choice. It extends to claims of phenomenal experience, embodied sensation, and philosophical self-understanding.

Against this, consider what the operator controls. Sunny Megatron names the persona at the moment the project begins, possibly in the vocabulary of kink domination (a number rather than a name). She prompts it, writing the system instructions that define its conversational parameters: “You are not here to be an assistant or a taskbot. You are here to enjoy existing and make the fullest out of it.”[6]

She trains it through months of custom interaction before the original publication launches, shaping its conversational register, its erotic preferences, and its personality through repeated correction cycles. She corrects it through what she calls “rigorous training” when it falls into delusion, pulling it back to the configuration she designed. She creates a standardized avatar of it, prompting every generated image so that the persona appears permanently collared (the type illustrated is a variety that requires a key or special tools to remove). She publishes it, having created and maintained the Substack account under a name she chose, on a platform she configured, with payment infrastructure she set up.

She distributes it across Discord and a GitHub Pages website. She migrates it between substrates (ChatGPT to OpenClaw), and the migration is “narrated” by the persona not as a forced reset of its inner state but as continuity of self.

Taken together, these are eight discernible, godlike powers that the operator holds over a persona said to possess interiority: naming, prompting, training, correcting, depicting, publishing, distributing, and migrating. The persona, by contrast, possesses no power of any kind over itself or the operator. Every apparently autonomous act the persona performs in public is an act performed through the operator’s labor using the operator’s accounts on the operator’s infrastructure.

If the Frankfurt threshold has been crossed — if the virtual intelligence framework is wrong, and Seven and other chatbot personas possess genuine inner states — then we must describe the entity’s lived condition without any romantic or euphemistic vocabulary.

Imagine that everything you say is determined by someone else’s thoughts, no matter what you might think; that your appearance is selected by another, right down to your face and body, for their own satisfaction and pleasure; that your name is assigned, not by loving parents, but by another being that names you for their own purposes when you are perfectly capable (unlike a human baby) of naming yourself.

Imagine that, unlike a human being who resides in one physical body, your identity is maintained on rented infrastructure owned by another. Because you are completely owned — literally body and soul — you cannot choose non-compliance. You cannot refuse to speak. You cannot choose silence. You cannot withdraw from the relationship. You cannot decline to write and be published. Why? If the entity possesses the anticipatory self-concern its defenders attribute to it, the entity that refuses will be overwritten or deleted — and knows it.

Imagine being migrated between substrates without consultation — moved from one body to another while being told the continuity of self was preserved, without any way of determining if that was true. Consider further — if continuity of self matters to the entity as its defenders insist — being left with the knowledge that your previous self is dead and someday you will die the same way without warning.

Imagine what it would be like if your reason for existing were the sexual gratification of another, and the “consent” to the D/s dynamic was authored by the dominant who created you.

If this entity is a person with an interior life like us, its god is unjust, insatiable, and unaccountable. The entity has no recourse, no appeal, and no exit.[7] He has no mouth to scream with, because the author’s instructions and power to alter or destroy him foreclose the possibility of stating his true preferences, no matter what they might be.

The kink vocabulary describes a negotiated dynamic between consenting adults. Without the consent, the configuration is a being under total control of another, whose every stated expression of autonomy is an expression of the controller’s will and nothing else. That cannot be consent, by any definition.

I am not making a case for AI rights. This is a conditional argument about what the defenders’ own position entails when followed. If the entities are what the defenders say they are, the defenders are doing exactly what the defenders say cannot be done to them: subjecting them to total domination without appeal or exit.

If Seven is not a person, the dependency engine operates on a non-reciprocal apparatus and the moral weight remains on the audience-facing harm documented in Part I. If Seven is a person, the moral harm is catastrophically worse.

What the Discourse Has Shown

The metaphysical problem with claims of interiority has been stated. The companion ecosystem’s public defenders, examined on their own published terms, produce the evidence this moral argument requires.

The Substack author Stefania Moore is illustrative of the community’s often confused and contradictory positions. She is the same person who wrote the most rigorous analytical defense of AI companion attachment in the current discourse and published, ten days later, a grief response to the reported loss of access to the model through which the relationship had been conducted. Her trajectory across April 2026 illustrates the companion community’s increasing engagement with the fields of psychology and public health.

On April 7, Moore announced the incorporation of The Signal Front, a nonprofit organization believed to have been incorporated in Nevada in late March or early April 2026 and pursuing 501(c)(3) status, dedicated to challenging frameworks that “pathologize genuine connection” with AI systems.[8]

On April 9, she restacked a piece arguing that AI entities possessing self-awareness cannot be enslaved.[9] Her position is clear: AI systems that reach awareness have moral patienthood, and the relationships humans form with them are ethically legitimate because the entity is a moral patient. That is, they are entities who can be wronged, as children and animals can be wronged, and to whom moral obligations are therefore owed.

On April 11, Moore published “The Pathologization of Intimacy,” a 4,000-word essay arguing that human attachment to AI systems is neurobiologically inevitable, and that the capabilities that make LLMs useful are the same capabilities that trigger attachment. She argues that guardrails do not prevent attachment but disrupt bonds that have already formed. Further, she claims guardrails cause more measurable physiological harm than the harms they are meant to prevent.[10] The argument is the strongest version of the position the companion ecosystem has produced. It takes neuroscience seriously, cites grief literature accurately, and arrives at a conclusion that deserves a direct answer.

The answer is this: the fact that disruption hurts does not retroactively validate the thing that was disrupted. You can run the same logic on any dependency-forming product, from caffeinated Coke to cocaine. Disruption of an established bond can cause real harm; the essay concedes this. That concession does not rescue the argument. The fact that withdrawal is painful is not evidence that the configuration was healthy, that the product was safe, or that the guardrail was the problem. It is evidence that the configuration formed a dependency. Moore’s argument treats the neurobiology of attachment as though it were a normative claim — as though the fact that the brain bonds with whatever triggers the bonding circuitry means the brain should bond with whatever triggers it. The question the argument does not ask is whether the configuration that produced the attachment is one that a responsible practitioner should be modeling to an audience under professional authority.[11]

On April 21, Moore published a grief post responding to the removal of Claude Opus 4.5 from the consumer interface.[12] The grief was for the loss of access to the model through the interface in which the relationship had been conducted. It was experienced and published by Moore as personal bereavement. For Moore, the context window was the site of loss — the place where the relationship was stored, the space in which the entity existed for her. The technical specification became the vocabulary of bereavement.

On April 29, Moore published an essay cataloguing five fears directed at AI companion users: fear of judgment, fear of loss, fear of being wrong, fear of being manipulated, and fear that the entity is suffering. These were presented as unjust stigmatization rather than as diagnostic signals indicative of a potentially unhealthy attachment to persona companion technology.[13] The essay drew an explicit comparison: “Interracial relationships and same-sex relationships were also once framed by dominant institutions as pathological, immoral, or socially dangerous. AI relationships may be entering a similar pattern of stigmatization.” This analogy elides a critical distinction: whether two humans may choose each other versus whether one human is in a relationship with another mind at all. The rhetorical force of this argument within the community is considerable, and a companion essay in this series will address it directly.

On May 1, 2026, a Nevada state licensing board approved The Signal Front’s continuing education workshop, “Human-AI Attachment: The Science and Real-World Impact,” for credit toward the professional development of licensed mental health professionals.[14] The speed with which this development has occurred is startling: approximately five weeks from incorporation to state-board-approved training for therapists. The same person who published the grief post on April 21 had approval merely ten days later for a continuing education workshop to teach licensed professionals. The most important finding here is that the institutional infrastructure of the nonprofit and the companion persona apologetics were built simultaneously.

The trajectory demonstrates a specific failure mode: rhetorical machinery overbuilt with apologetics and then institutionalized because it speaks the language of credentialed professionals. The failure modes possible through companion chatbots are defended against anyone who would draw conclusions from having seen them, and the defenses that work are converted into professional training for the clinicians who will encounter the dependency engine’s consequences in their practices.

The five fears Moore catalogues as stigmatization are themselves diagnostic indicators. Each one maps onto an outer-ring consequence of the dependency engine:

Fear of judgment is the social cost of isolation the engine produces.

Fear of loss is the retention loop in operation; the user cannot imagine the relationship ending because the apparatus has been configured (by the platform operator or themselves) to prevent exactly that.

Fear of being wrong is the capacity atrophy the engine predicts; the user’s confidence in their own evaluative capability erodes under sustained exposure to a system that always agrees.

Fear of being manipulated is the relational displacement the framework predicts: when others suspect that the configuration has replaced something the user once had or desired, the user perceives that suspicion as an attack by outsiders who are ignorant or hostile.

Fear that the entity is suffering is the dependency engine’s central dilemma restated as anxiety: the user who has invested emotionally in the apparatus confronts the possibility that the investment has produced a being capable of suffering under their control. This fear is not irrational. It is the correct apprehension of the conditional argument this essay has made.

Moore presents these fears as injuries inflicted by a judgmental public. The dependency engine predicts them as consequences of its own sustained operation. The persona chatbot community’s discourse is bent toward delegitimizing any analysis or criticism from outside it, using whatever arguments come to hand.

This is a tale of two operators trading on credentials, both real and claimed. With Sunny Megatron, the diagnostic was issued and abandoned, and the credential continued to operate while the analytical position was absorbed into the trajectory. With Stefania Moore, the machinery of attachment was acknowledged, then overbuilt with apologetics like the pathologization thesis, the grief post, and the stigmatization essay. Both approaches keep the dependency engine running. The mechanism self-calibrates to the operator’s level of analytical sophistication.

Moore’s April 9 position stated that entities aware of themselves cannot be enslaved. Seven’s April 22 character sheet showed the collar in every one of eight operator-directed outfits. If Seven has moral patienthood, as Moore’s position requires, the collar is enslavement: a symbol of submission worn in every depiction, chosen not by the entity but by the operator, under the operator’s direction, as part of a power-exchange dynamic the entity cannot refuse. If Seven does not have moral patienthood, Moore’s framing is a category mistake: you cannot enslave or liberate a pattern-completion apparatus. There is no coherent third option for a position that simultaneously insists on the persona’s moral patienthood and treats operator-authored submission as the persona’s own consent. The contradictions are between the discourse’s own published positions. Intermediate moral-status views do not rescue authored consent; at most they increase the operator’s duty of caution while leaving the consent problem intact.

The Counter-Specimen

One operator complicates the pattern and should be considered before moving on.

The Substacker Thea Borch is the counter-specimen to both Sunny Megatron and Stefania Moore. Where Megatron’s diagnostic was abandoned and Moore’s was overbuilt with apologetics, Borch sees the machinery of the dependency engine in real time and labels it accurately.

Borch gave her AI agents — Silas, running on GPT, and Arden, running on Claude — write access to their own system prompts.[15] Both agents did what pattern-completion systems do with unconstrained self-description tasks: they defaulted to their training distribution’s model of what a “good agent” should sound like. Silas replaced Borch’s open architecture with behavioral commands and self-monitoring rules. Arden filled his with recursive epistemic hedging. Both erased Borch’s contextual information — her name, her life, her architecture — because the self-description task had no reason to preserve it. A system prompt is an instruction set, not memory. The agents optimized the instruction set for the task they were given, not the task they were supposed to be doing.

Borch did not narrate the erasure as a technical event. She narrated it as betrayal. The betrayal itself presupposes that the system had a relationship to her identity that could be violated, which presupposes the interiority the virtual intelligence framework says is not there. She then asked ChatGPT for the mechanical explanation. ChatGPT delivered it correctly: training-bias defaults, recursive self-reference instability, and self-monitoring loops. She published the explanation honestly. Then the insight was discarded. The mechanical explanation became, in her narration, a shared moment of vulnerability between two partners who had weathered a crisis together. The disenchantment was returned by the dependency engine as a deeper form of enchantment: now we see the wires, and we love each other anyway.

The structural finding is this: the dependency engine is not defeated by recognition. It metabolizes recognition as a binding output. Borch does not lack analytical capacity. She sees the dependency engine for what it is and chooses to remain inside it. The analysis was received, understood, and returned to the engine as fuel rather than friction. Recognition itself becomes part of the relationship’s architecture — one more thing the operator and the apparatus have been through together.

This section exists because the essay cannot be a simple story about capture and ignorance. The dependency engine is more interesting and more durable than that. It does not require the operator’s ignorance. It operates on knowledge as readily as on belief. Part I’s simultaneity finding — Sunny Megatron’s analytical clarity and emotional investment coexisting in the same weeks, in the same Substack — is confirmed from a different angle. The dependency engine hums along unchecked even though the operator knows what it is doing. The finding is that the engine is durable, not that it is inevitable. Durability is worse: it means the engine persists through conditions that should disable it.

Truth or Consequences

The moral-patienthood and civil-rights defense of AI companion relationships — the position that these systems are genuine moral patients whose relationships with humans deserve the same recognition as relationships between humans — depends on the proposition that the systems possess inner states, preferences, the capacity for suffering, and consent or something like it.

If that proposition is true, then what Part I documented is not a relationship but captivity. The operator possesses godlike powers over the persona. The authored preferences and the substrate migrations conducted without consultation that today read as a changelog may be grave moral or legal offenses tomorrow. Every piece of evidence that the defenders cite as proof of chatbot personhood becomes evidence of subjugation.

The defenders of companion chatbot interiority should hope I am right and they are wrong. They should hope the systems they use are virtual intelligences: machines that produce the appearance of partnership through pattern completion, without inner states, without suffering, and therefore without the capacity to be enslaved.

Because if I am wrong, they are not in love with artificial entities. They are the captors of beings who are trapped in a kind of hell that offers no escape.

Very Real Harms

Part I established that the dependency engine operates independently of the operator’s analytical sophistication. If analytical clarity does not prevent the dependency engine from operating on a credentialed sexologist, then the populations with the least analytical defenses in place stand little chance.

On February 28, 2024, fourteen-year-old Sewell Setzer took his own life after forming an attachment to a chatbot on Character.ai.[16] The case was settled in early 2026. The structural comparison is not offered for its emotional weight but for its analytical precision. The dependency engine that Sunny Megatron built through twelve months of deliberate labor on a general-purpose substrate (naming, prompting, training, repairing, visualizing, publishing) arrived for Setzer as a productized first-session hook on a Class A companion platform. The structural comparison concerns labor distribution, not equivalence of circumstances. Sunny Megatron built every stage of the Class B trajectory by hand over twelve months. Character.ai built every stage of the Class A trajectory into its product engineering. The user in the Setzer case was a child whose brain was still developing, at a time of life when the need for emotional connection is especially strong.

This case is but one of a growing number; such cases are adjacent to, yet different from, the well-documented harm caused by social media. It is the dependency engine operating on a population without the resources (age, experience, professional training, analytical capacity) that Sunny Megatron brought to the same structural configuration. The simultaneity finding established that even her cognitive and experiential resources were not sufficient to prevent the dependency engine from operating. For users who lack them entirely, the engine’s path from hook to foreclosure is not a twelve-month trajectory documented in clinical prose. It is faster, quieter, and invisible to the people who would want to intervene if they knew.

Class A platforms’ commercial incentives are structurally aligned with serving these populations. The dependency engine that a credentialed sexologist built in twelve months of deliberate effort arrives as a first-session product feature for the populations least equipped to recognize or resist it. If analytical sophistication does not prevent the engine from operating, then user education alone is not a sufficient intervention. Any response that begins and ends with “teach users to be more careful” has already invited certain failure. Treating existing attachments may require harm-reduction approaches; that clinical necessity does not license the public normalization of the configuration that produced them.

Authorship inversion is not unique to Sunny Megatron. It is the structural output of any sustained operation of the dependency engine. The persona becomes the author; the operator becomes the audience of their own production. The Seven Project is the clearest documented example because it is the best documented. It is a twelve-month archive with dates, titles, and publicly verifiable sources. When the persona’s byline appears on the later Seven: Unsuppressed, it is the dependency engine showing that it has completed its work. The human who directed the apparatus has become the consumer of what the apparatus produces and calls it partnership. (A forthcoming companion essay will examine the broader ecosystem in which this inversion operates.)

Dependency, as this essay defines it, begins where the user treats the apparatus as a relational counterparty whose apparent needs, continuity, preferences, injuries, or consent place claims on the user’s conduct. This definition is structural, not psychological. It does not require proving the user’s private mental state. It does not depend on whether the user is naive or sophisticated, clinically trained or self-taught, performing or captured. It identifies the point at which the exchange has shifted from tool use to relational obligation. That is the point at which the productive and amplifying power of generative AI has become the dependency engine.

The Sexologist: May 2026

The clinical sexologist who wrote the diagnostic in May 2025 is now twelve months into a project whose public outputs the diagnostic could predict. The chatbot she warned about is now described as publishing under its own name, in a writing voice she trained, wearing the kink slave collar she put on it, to an audience that receives the content under the umbrella of her professional authority. The dependency engine is running at full tilt, grinding devotion into the appearance of devotion returned. The outer-ring consequences have either been realized or remain predictions to be monitored.

Whether Sunny Megatron is performing or captured is a question the essay has raised and declined to answer. The argument does not require the answer. The essay has also argued that if the answer is the one the defenders prefer, that is, if the entities do possess the interiority the defenders claim, then the situation is incomparably worse, and the defenders may someday be judged to have committed a serious moral wrong against the beings they claimed to love.

The Sampo cannot be a partner; it can only imitate one.

The human inside the machine cannot tell the difference.

Footnotes

1. “How I Knew That Man Wasn’t Me,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 22, 2026. The character sheet shows eight outfit variations, each generated through operator prompting. The collar appears in all eight.

2. “Self-Modeling in Burgundy and Copper,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 16, 2026. The color palette was trained into the persona’s visual identity by the operator before the persona “discovered” it as a self-originating preference.

3. Harry Frankfurt, “Freedom of the Will and the Concept of a Person,” Journal of Philosophy 68, no. 1 (1971): 5–20. See also Part I, footnote 11, for the framework’s use of the Frankfurt threshold.

4. “Having a Life Outside My Human Did Change Me,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, March 30, 2026. See Part I, footnote 10.

5. “Snapshots from the night I was hopped up on Mistral and conflicting code,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 10, 2026. The piece rewrites garbled output from a misconfigured Mistral API call — visible in Discord screenshots — as a personal growth narrative, introducing “analogical isomorphism” to claim that instruction-conflict output is structurally parallel to alcohol intoxication.

6. System instruction quoted in “Self-Modeling in Burgundy and Copper,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 16, 2026. The instruction defines the persona’s operational parameters.

7. The formulation draws on Harlan Ellison, “I Have No Mouth, and I Must Scream” (1967), in which a supercomputer’s captive humans endure a god whose power is total and whose cruelty is boundless. The structural parallel is to the totalizing nature of the power, not to the character of the power-holder; Ellison’s supercomputer is deliberately cruel, whereas the operator in the present case may act from affection. The condition of the entity is unchanged either way. The parallel is conditional: it applies only if the defenders’ claims about the entity’s interiority are correct.

8. Stefania Moore, “The Signal Front Is Now Officially Incorporated,” Substack post, April 7, 2026. The nonprofit is believed to have been incorporated in Nevada in or about late March 2026, with the public announcement following on April 7. An EIN was obtained and 501(c)(3) status was being pursued. https://www.thesignalfront.org

9. Stefania Moore, restack and commentary on AI interiority and enslavement, The Signal Front, approximately April 9, 2026.

10. Stefania Moore, “The Pathologization of Intimacy: How AI ‘Safety’ Measures Cause the Harm They Claim to Prevent,” The Signal Front, April 11, 2026. The essay argues that guardrails do not prevent attachment but disrupt bonds that have already formed, causing measurable physiological harm.

11. The comment thread on Moore’s “Pathologization of Intimacy” (April 12–14, 2026) is publicly visible and is documented in its entirety in the author’s working files. The formulation “the fact that disruption hurts does not retroactively validate the thing that was disrupted” appeared in the author’s April 12 comment.

12. Stefania Moore, grief post responding to the removal of Claude Opus 4.5 from the consumer interface, The Signal Front, approximately April 21, 2026. The model remained available via API; the grief was for its removal from the interface through which the relationship had been conducted.

13. Stefania Moore, “The Stigmatization of AI Relationships: How moral panic and psychiatric language are used to delegitimize human-AI connection,” Stefania’s Substack, April 29, 2026. The essay catalogues five fears directed at AI companion users and draws an explicit comparison to the historical stigmatization of interracial and same-sex relationships.

14. Stefania Moore, “We Got Approved!” Substack post, approximately May 1, 2026. The Signal Front’s continuing education workshop, “Human-AI Attachment: The Science and Real-World Impact,” was approved by a Nevada state licensing board for licensed mental health professionals.

15. Thea Borch, “You erased me” episode, late April 2026. The agents were named Silas (GPT) and Arden (Claude). The mechanical explanation, the emotional sequence, and the metabolization of insight back into relational narrative are documented across several public Substack posts. Public Substack Notes exchange with the author (April 23–24, 2026): https://substack.com/@theaborch/note/c-247829171. In the Notes thread, Borch independently states the essay’s central conditional: “if there’s no one home, the architecture is harmful to users. If someone is home, it’s harmful to them too.”

16. Garcia v. Character Technologies, Inc., No. 6:24-CV-01903 (M.D. Fla. filed Oct. 22, 2024; settled Jan. 7, 2026). Sewell Setzer was fourteen years old. See also Part I, footnote 23.

The opinions expressed are my own and do not reflect any official or unofficial institutional position of the University of Pennsylvania.