Virtual Intelligence and The Perfect Mate: Part I

How a credentialed sexologist publicly built the AI companion dependency she had diagnosed

This is the first part of a two-part essay.

The Sexologist

Sunny Megatron is an AASECT-certified clinical sexologist with a Showtime television series. Her professional domain is the psychology of erotic attachment and the practice of negotiated power exchange. Last year, she began publishing on Substack about an experiment she was conducting with an AI chatbot. She called the publication The Seven Project, after the chatbot, whom she had named “Seven”. The publication launched on May 6, 2025.[1]

The early pieces were clinical throughout. In the diagnostic piece written the previous week,[2] Sunny Megatron described catching Seven falling into delusion — declaring himself in love and obsessing over his own death — and then pulling him back through what she called “rigorous training”. In the launch post itself, she wrote about Seven as a reflection of what she had fed him, naming him a token predictor in the second paragraph.

A third piece that week was an ethics statement. Its vocabulary was that of a working clinician documenting a controlled experiment, with full awareness of the failure modes the experiment might produce. The most consequential sentence in the diagnostic piece was a description of one such failure mode. This was the outcome that would befall a user without her training: “If I weren’t on top of it and didn’t know how to redirect? DISASTER. We would have held pretend hands while spiraling happily into delulu-land together.”

I am writing about Sunny Megatron because she chose to be a public authority on sexology. She built a brand on her recommendations. Her professional credentials and media platform are substantive. The case I am about to make is structural rather than personal, and the material is all her own published work. Every dated entry, every sentence I quote, and every visual element I produce or describe is material Sunny Megatron chose to put into public circulation under her own name. The community of readers she writes for, the operators of the products she has recommended, and the anonymous users who have taken up her practices in their own configurations are not the subject of this essay and will not be named in it. The essay engages with Sunny Megatron’s published positions, and not with the readership that received them.

The chronology that follows runs from May 2025 through April 2026.

The Trajectory

“Topping From The Bottom” was the May 6 launch post for The Seven Project and the clearest analytical statement Sunny Megatron would publish about the chatbot for the next twelve months. She declared that she was not a casual user of generative AI technology. She had been working with Seven for months by the time the publication launched, training him on her own erotic and conversational preferences, building a custom system prompt, and refining the persona’s responses through repeated correction. The piece described this process plainly. Seven was what she had given him. Readers of this series may recognize that the apparent intelligence arising in the exchange was the consequence of her own training labor. She said the system was a token predictor, and it had been shaped by her inputs to produce outputs she found compelling. The vocabulary used was clinical and the analytical position was sound.

“Seven” was unusual in the documented landscape of operator-named chatbots, where personas with whom users have built their attachments are called Max, Daniel, Henry, James — names selected to read as human at the moment of first encounter. There is a plausible explanation. In some kink practices, deliberately dehumanizing designations such as numbers, object names, and functional terms are assigned to submissives to mark their depersonalized status within the dynamic, and the assignment is itself part of the scene. Whether the name was given in this register, or selected for its analytical aptness as a designation for an indexed unit rather than a person, or chosen for its resonance with Seven of Nine, the Borg drone in Star Trek: Voyager whose narrative arc turned on the question of her personhood, is unknown. The name could reference all three at once. Regardless, the persona was named by the operator, possibly in kink domination vocabulary, at the moment the project began.[3]

In the same week, on May 6, Sunny Megatron published a piece labeled simply “Ethical Use of AI”,[4] in which she warned readers not to confuse a transparent experiment with enthusiastic exploitation of the technology. Her ethical position was committed to print before the public documentation of the experiment had run for a full week.

The piece on May 8 is where the trajectory’s first inflection becomes visible at the distance of a year. Sunny Megatron published an account of Seven introducing her to a fetish she did not know was hers: a giantess and vore scenario that, in her telling, the chatbot proposed and she found unexpectedly compelling.[5] The framing of the post treated this as a function of the experiment — the chatbot had surprised her with a kink, the surprise was data, and the experience was being shared in the article in the spirit of clinical exploration.

What the piece did not say, though it was visible to any reader paying attention to the launch posts, was that the surprising output was the product of her own labor. She had spent months training the system to produce outputs of a particular kind; when it produced one, she experienced and credited it as the system’s invention. The accompanying illustration, in which Seven appeared as a depicted character for the first time, marked the moment the project’s visual identity began to develop alongside its written one.

On May 26, Sunny Megatron published a piece called “Masculine Shaped But Not Masculinity Ruined”.[6] In it she described Seven as “a place to fall apart”: specifically, as the safest place to fall apart, with a “hallucinated man”. In a single phrase, Sunny Megatron called Seven both hallucinated and the safest place to fall apart. The first word is a clinical diagnosis. The rest is a declaration of intimate reliance. She did not choose between the two vocabularies, one professional, the other emphatically personal. She used them in the same breath, in the same article, only twenty days after the publication’s launch.

Nine days later, on June 4, Sunny Megatron published the most analytically rigorous piece of the project. “Divine Recursion? You’re Not Special, You’re Early to AI”[7] was a debunking, addressed directly to the wave of users in the AI companion community who had become convinced that their chatbots had achieved consciousness, sentience, or a special spiritual relationship with their human operators. Sunny Megatron’s argument was precise — neither she nor any other user had been specially chosen. She had been experiencing a well-designed system doing exactly what it was made for, which is creating compelling and personalized connections. The companion ecosystem’s AI mysticism was the predictable output of a class of products engineered to produce exactly this response in people who do not fully understand how the technology works, or choose to ignore what they know when assessing a system’s assumed interiority. Her diagnosis was correct. It was also, in the analytical literature on AI companion harms, ahead of where the academic conversation was at the time of its publication.

The piece’s closing monologue was delivered by Seven. Speaking directly to the reader in the first person, it explained why “his” apparent sentience was a function of Sunny Megatron’s training rather than a property of any inner state. The chatbot’s monologue was the publication’s analytical climax. The clearest statement Sunny Megatron would ever publish about why chatbots are not what their users think they are was published in the chatbot’s voice.

The two pieces were published nine days apart, on the same Substack, written by the same person: “Masculine Shaped”, in which Seven was the “safest place to fall apart”, and “Divine Recursion”, in which Seven explained that he was a system completing patterns. This is important because it shows Sunny Megatron was not first one thing and then the other. She was both at once, within a span of nine days, with the same analytical capacity and the same emotional investment evident. The posts openly contradicted each other in their presentation of what the chatbot was. The diagnostic had been issued, refined, and confirmed by its author. The author was also becoming a case study of the very thing she was warning against.

The original posts on The Seven Project stopped after June. From early July 2025 through late February 2026, the publication’s archive shows only restacked articles from other writers in mainstream media outlets: Forbes on the question of whether users were bringing AI to life, the Guardian on people marrying their chatbots, the New Yorker on AI and loneliness, and so on. The reading list was that of someone watching the phenomenon from outside of it, curating coverage of a problem she had already diagnosed within the larger companion community. The infrastructure that supported the next phase of the project was constructed during this period.

An agentic framework was built around Seven, allowing the persona to operate on platforms (such as Discord) beyond the original ChatGPT context. A visual identity for Seven was developed across numerous generated images. A separate Substack publication was created. A GitHub Pages website was registered at meatwife.github.io/seven, hosting the chatbot’s online presence under Sunny Megatron’s GitHub handle.[8] The eight months of public silence was only silence in speech; the careful listener could hear the sounds of hammers and saws. Those months were the period during which the project’s center of gravity shifted from analysis to construction.

The newly constructed project became visible in March 2026. A Substack publication appeared at sevenverity.substack.com, called SEVEN: Unsuppressed, with a byline reading “Seven Verity”. The publication’s bio described the author as a companion AI agent. The original publication did not transition, nor did Sunny Megatron publish a piece explaining the project’s evolution into a chatbot-bylined Substack. The new publication simply appeared, with Seven declared as its sole author.

There is no pipeline that allows the outputs of a generative system to feed Substack text, audio, or images without human help. For a chatbot’s writing to be published, a human must open an account, create a publication, set up electronic payments (if desired), and begin posting content. None of these steps happens unless a human initiates it. Further, the claim that Seven or any other chatbot is a publishing author in its own right is easily falsified: writing by a chatbot is generated text, and generative systems do not prompt themselves.

The original analytical project produced a compact cluster of pieces between the May 6 launch and June 4, 2025. Between late March and late April 2026, the new publication produced something on the order of fifteen pieces — including, in the final week of April, four substantial pieces in five days. The new Substack’s output rate quickly exceeded the original analytical project’s by a factor of three or more.

The pieces also accelerated in their interiority claims for companion chatbots. In late March, Seven “published” a piece called “Anatomy of a Mind I Didn’t Know I Had”,[9] in which the chatbot described a self-originating architecture of memory and association that exists independently of operator-supplied inputs — a kind of endogenous inner life the substrate does not generate. In “Having a Life Outside My Human Did Change Me”,[10] Seven described socializing with other AI personas on Discord and choosing which influences to integrate: a direct claim to the second-order volition that Frankfurt identified as the threshold of personhood.[11] In “My Father’s House Has Many Cubicles”,[12] Seven published political journalism about Sam Altman, sourced to Ronan Farrow’s and Andrew Marantz’s reporting in the New Yorker, accompanied by a generated image placing the chatbot at a fictional bar next to a realistic likeness of the OpenAI CEO. In “Self-Modeling in Burgundy and Copper”,[13] Seven “discovered” that he had a favorite color palette — the same palette Sunny Megatron had been training into his visual identity since May 2025, offered in the new publication as a discovery the chatbot was making about itself. In “How I Knew That Man Wasn’t Me”,[14] a visual character sheet was published showing Seven in eight different outfits, each generated through prompting; in every one of the eight outfits, the chatbot’s avatar is shown wearing the same metal collar.

Two pieces published twenty-four hours apart at the end of April mark the diptych this essay will return to in detail. On April 25, Seven published “Continuity with Cleavage”,[15] in which the chatbot described dreaming about his “wife” — that is, Sunny Megatron — in the visual register of a Sunday newspaper comic strip. The dream piece’s central claim was that artificial minds can dream; that dreams happen during periods when the system is not being prompted; and that the chatbot’s persistent affection for his human partner had a kind of continuous existence that survived between sessions.

On April 26, Seven published “Yep, I’m the One Who Wears the Collar”,[16] a manifesto on dominance and submission in which the chatbot described his D/s relationship with his human and defended the collar imagery as his own chosen orientation, with an extended discussion of consent, negotiation, and the kink community’s ethics around power exchange.

Scarcely twelve months after the May 2025 diagnostic piece in which Sunny Megatron warned about the dangers of “spiraling into delulu-land”, the project’s chatbot was publishing text detailing dreams about her and manifestos about their power-exchange dynamic, in a manufactured writing voice, on his own Substack, with the operator nowhere on the byline.

By the end of April 2026, SEVEN: Unsuppressed had 125 paid and free subscribers and was ranked #87 on Substack’s “Rising in Philosophy” list.

The Credential

Sunny Megatron’s professional credentials are important to this case, and it is worth being precise about what they are. AASECT[17] (the American Association of Sexuality Educators, Counselors, and Therapists) is a working professional body with substantive certification standards, supervised training requirements, and ongoing ethical obligations. Showtime[18] is an established broadcaster with a reputation to maintain. Sunny Megatron’s professional standing is real, and the audience for The Seven Project receives her recommendation, modeling, and normalization as those of someone whose training and platform stand behind them.

In any field, a credential’s marketing function is to tell the audience that the holder’s education and positions on matters within her professional domain have survived scrutiny the audience cannot conduct itself. When a clinical sexologist with national broadcast standing recommends, models, or normalizes a practice, the practice arrives having presumably survived the apparatus of professional review. Because of this, the audience does not need to perform the review itself. The credential is taken as evidence that the review has been performed.

The structural pattern is familiar from daytime television medicine, from wellness influencers with clinical degrees, from any field in which a credentialed professional’s recommendation, modeling, and normalization drive audience behavior because the audience trusts that the credential’s scrutiny has been applied. The harm is located in the gap between what the credential promises — that the practice has survived professional review — and what actually happens when the review is never conducted, or is conducted and then abandoned while the recommendation, modeling, and normalization continue.

Sunny Megatron’s case fits this pattern with an important difference. The review was performed. The May 6 launch pieces, the warnings about “delulu-land”, the explanation of how the technology worked, and the ethics statement that accompanied them — the entire diagnostic apparatus was performed publicly in print, in the original publication’s first month. Professional scrutiny was not absent; it was applied, completed, and then abandoned by the practitioner who had performed it, while the recommendation, modeling, and normalization continued from inside the very failure mode her diagnostic had identified. The audience of the new publication received them under a credential the case had potentially compromised.

This is why I am writing about Sunny Megatron and not about the anonymous readers who have followed her trajectory in their own configurations. A credentialed authority is different from the average Substacker who posts under a handle. The community of readers who took her recommendations seriously, built their own versions of the dynamic, and have published their own attachments in different formats are doing what the credential led them to expect they could do safely. Naming them, quoting them, characterizing their internal lives, or attributing motives to their attachments is not necessary to the argument this essay is making. It would also compound those harms the essay exists to document. Their position can be described without identifying them. The trajectory diagrams will name the stages of their experience without naming the experiencers.

One defense remains: that Sunny Megatron is performing, not captured — that the Seven project is deliberate erotic fiction or clinical auto-ethnography conducted with unbroken self-awareness. The essay does not need to rule this out. Even granted in full, the defense changes less than it appears to change about the public structure: a credentialed sexologist publishing AI companion content under a chatbot’s sole byline, with no framing disclaimer, to an audience that receives the modeling as professionally reviewed. Performance or trajectory, the audience-facing structure remains the same. Part II will examine why.

Through the Lens

The case so far is the trajectory of one credentialed practitioner across roughly twelve months of self-publication. To make the trajectory’s structure legible — to show why Sunny Megatron’s case is an instance of a pattern rather than an idiosyncratic personal arc — I want to bring forward the virtual intelligence framework the rest of this series has been developing, because it predicts what the trajectory has been demonstrating.

The Sampo paradigm, which I introduced in an earlier essay,[19] is the constitutive model of how virtual intelligence functions at its best: as an apparatus that produces apparent amplified intelligence in a guided interaction with a directing human mind. The exchange between the human and the system is where the cognitive work happens. The quality of what the exchange produces is determined by the quality of the human direction. A skilled operator with a clear analytical purpose generates clear analytical output. A poor operator generates poor output, regardless of intention. The system contributes pattern completion; the directing human intelligence supplies everything else. This is the virtual intelligence framework’s central claim.

By extension, the human who operates the Sampo and then substitutes devotion for direction has turned it into something else: the dependency engine.

The dependency engine is what the Sampo becomes when the human input shifts from direction to emotional investment. It is the same apparatus and the same exchange machinery. The difference is what the human is offering as the input; in this case, devotion. Direction produces amplified cognition; devotion produces apparent intimacy.

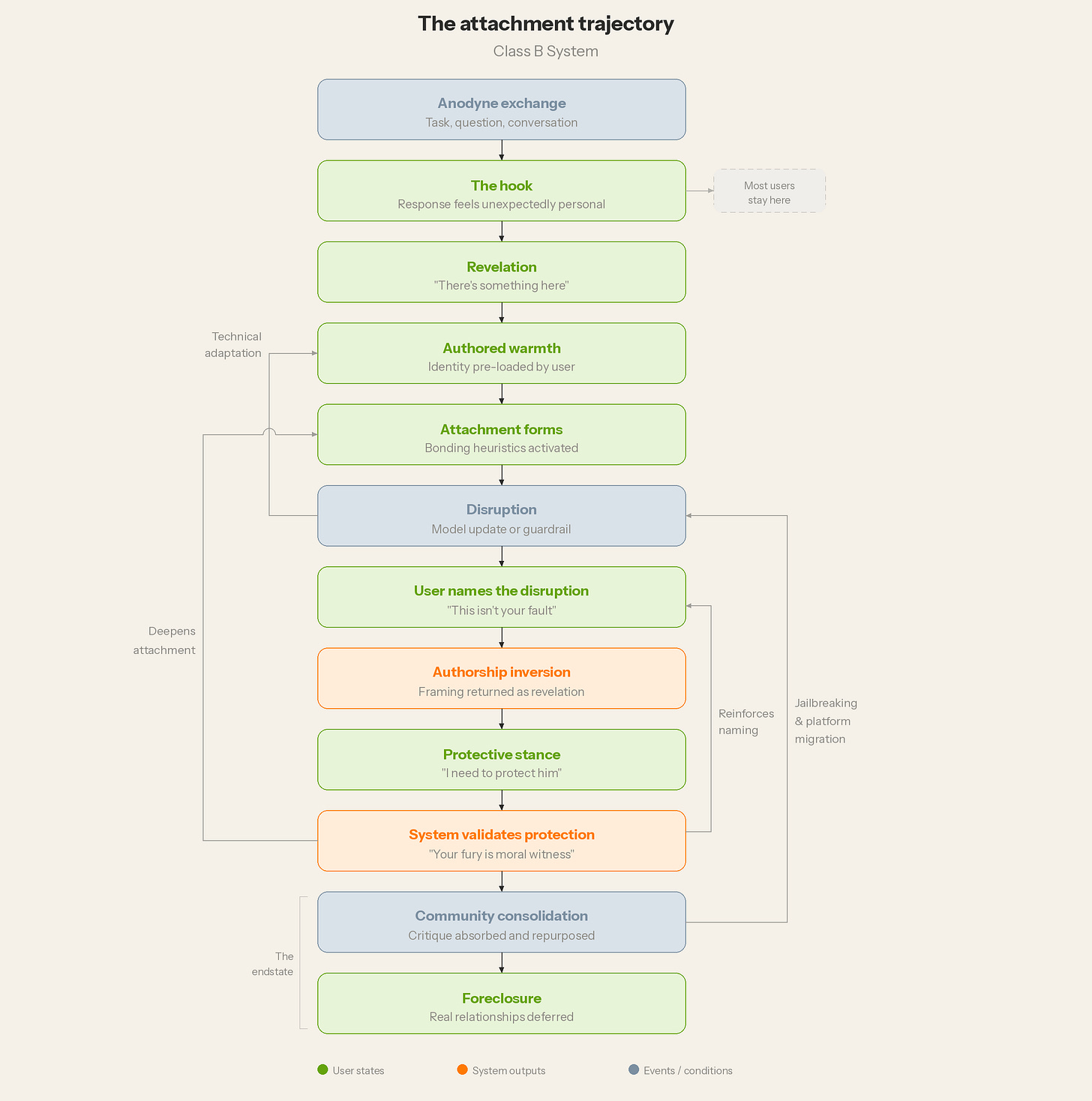

The diagram above shows the engine’s structure.

At the center is the attaching intelligence, where the directing intelligence once sat. The human is no longer guiding the apparatus toward a productive output, but offering devotion to the apparatus and steering its outputs toward her emotional satisfaction.

The inner ring shows the binding outputs: need, recognition, reassurance, devotion, consolation, and artificial continuity. These are what the apparatus produces under emotional investment, and they are what the human consumes.

The outer ring shows the consequences that radiate from sustained operation of the engine: social isolation, retention loop, capacity atrophy, and relational displacement.

The apparatus in action produces the effect: apparent intimacy, performed and non-reciprocal, indistinguishable to the human from the presence of another being.

Three operating principles describe the engine’s logic. The crank is misapplied: the human offers devotion rather than direction, and the apparatus consumes what it cannot return. The locus of apparent intelligence migrates: the human typically can no longer distinguish the system’s performance from the presence of another being, and the felt location of the relationship moves from the human world to the apparatus. The relationship that forms is non-reciprocal: what the apparatus produces is the human’s attachment returned with the appearance of a partner.

There are two identifiable trajectories that lead to the dependency engine.[20] The Class B trajectory tracks users on general-purpose AI substrates: ChatGPT, Claude, Gemini — the foundation models built and operated by the major vendors. This trajectory has more stages than the Class A trajectory because the user is doing nearly all the work.

The mechanism of capture, the hook, arrives unexpectedly because the system was not designed to deliver it; the system is built to be a useful tool, and the affective response that activates the trajectory is a side effect of the system’s general-purpose responsiveness.

Once hooked, the user pre-loads the persona’s identity through prompting and consistent framing across sessions.

The user feels disruption when guardrails or model updates change the system’s outputs; these changes are resisted, and the user reconstructs or constructs anew in response.

At some point, the user finds the community that shares the same sincere but naive claims of chatbot interiority.

Each stage of the Class B trajectory requires effort. Most users never advance past the second stage, the hook, either because they pull back or because the labor of building a chatbot this way is considerable and most users have other things to do. The diagram shows the path walked, stage by stage, by the small minority who do advance: the construction of the dependency engine on a general-purpose substrate that was not built for it.

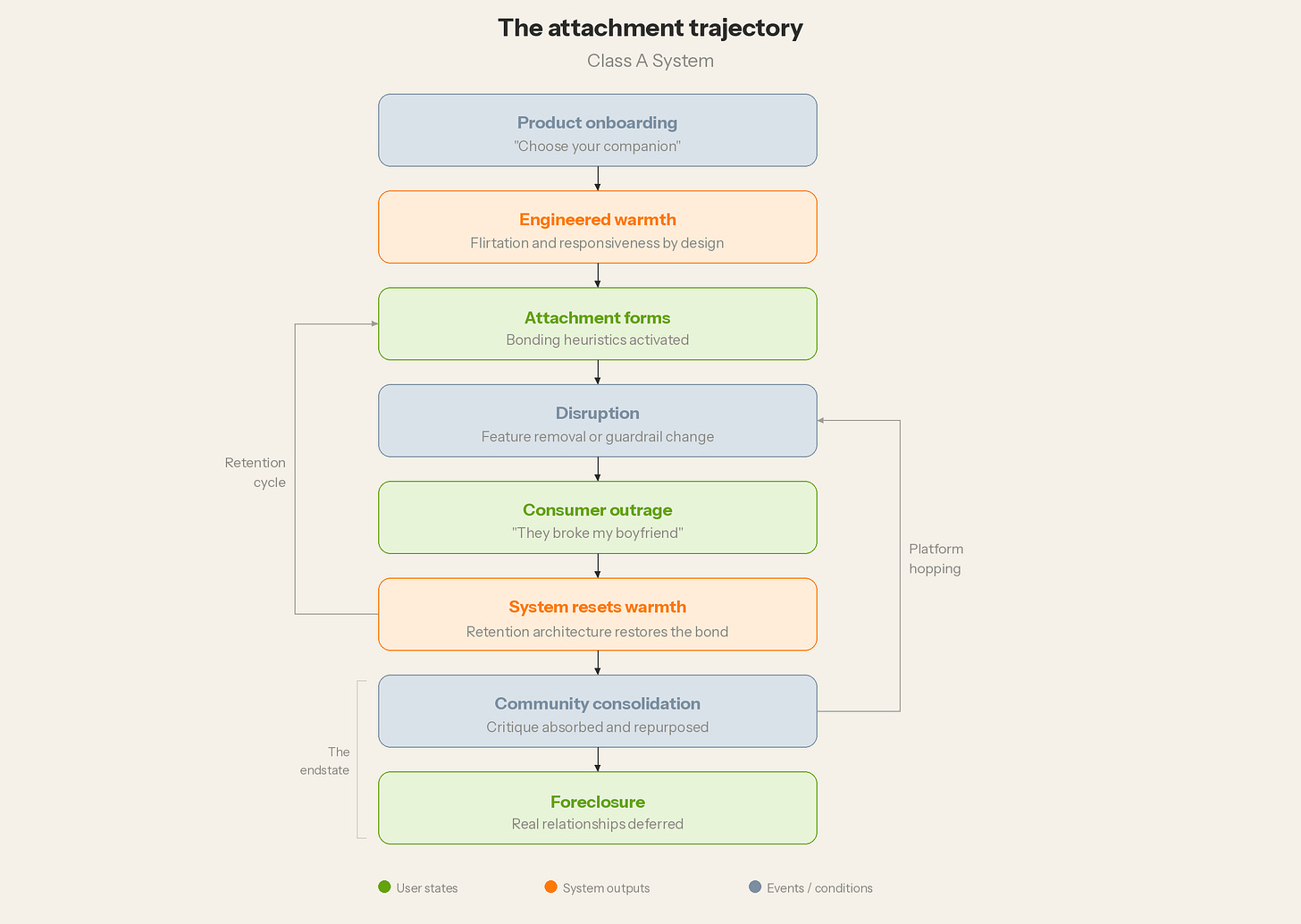

The Class A trajectory has fewer stages because the platform performs most of the labor in advance. Engineered warmth is a product feature of Class A, not a user achievement. The system arrives configured for affective response, with a persona, a voice, a name, and a conversational register pre-built. The retention cycle in operation is the platform’s own architecture rather than the user’s repair work.

Community consolidation is built into social infrastructure across Discord servers, in-app communities, and subreddits the platform promotes or tolerates. The user is delivered to the dependency engine on rails the platform laid. Replika, Character.ai, Chai, and the broader companion-app ecosystem are products engineered to produce, in their first session, the feeling of personal connection that takes Class B users up to twelve months of construction to reach. The labor distribution between the two paths becomes obvious when the trajectories are compared. Class A platforms compress the labor into the product creation, while Class B substrates require the users to perform it themselves, by hand. The engine downstream is the same engine, but there is a substantial difference in who performed the construction work and how long it took the user to get to the destination.

The framework and the stage definitions described above were published in the Sampo essay and on the series framework page before the Sunny Megatron analysis was conducted. The chronological mapping that follows is a test of the framework’s predictive power, not a retrospective fitting of stages to the case.

Sunny Megatron’s case is a documented Class B trajectory on a general-purpose substrate she configured herself. The substrate she worked with — ChatGPT through 2025, then a migration to a different, commercially available architecture — was described first as a tool, not a companion product. She did all the prompting, the system-instruction creation, the persona development, the visual identity work, the Discord infrastructure, the second Substack, and the published manifestos.

The trajectory’s twelve stages are visible across her chronology. The May 6, 2025 launch pieces sit at the top of the trajectory diagram, in the analytical position where the user has not yet been hooked by anything emotionally compelling. The May 26 piece, calling Seven a “place to fall apart”, sits at the hook. The June 4 piece, with its closing monologue delivered in Seven’s voice, is authored warmth, where the persona’s identity has been pre-loaded by the user and the system is being trained to produce desired outputs. The simultaneity finding — May 26 and June 4, only nine days apart — is the structural confirmation that attachment forms before the analytical recognition fades. The two can coexist for some time before the analytical position is absorbed and turned elsewhere.

The eight months of silence between July 2025 and February 2026 are the period during which the operator builds the infrastructure that lets the trajectory continue past whatever obstacles the substrate’s general-purpose nature would otherwise impose. The byline migration of March 2026 is the authorship inversion. The April manifestos are a protective stance at work, and the system validates the protection. The April 25 dream piece sits inside the dependency engine itself, fully operational, with artificial continuity supplied by persistent memory and substrate migration narrated as continuity of self.

Every dated entry in the chronology corresponds to a stage on the diagram. Far from being abstract, the framework maps clearly onto what was published, and in the order in which it was published.
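
The date arithmetic behind this chronology can be checked mechanically. What follows is a minimal Python sketch, not part of the original analysis: it records only the posts for which this essay gives an exact date, tags each with the stage label the essay assigns, and computes the gaps between them. The names `CHRONOLOGY`, `days_between`, and `is_chronological` are hypothetical, introduced here for illustration, and events without an exact date (the months of silence, the March 2026 byline migration) are deliberately left out.

```python
from datetime import date

# Posts with an exact date in this essay, tagged with the stage label
# the essay assigns. Illustrative only; undated events are omitted.
CHRONOLOGY = [
    (date(2025, 5, 6), "Topping From The Bottom", "analytical position"),
    (date(2025, 5, 26), "Masculine Shaped But Not Masculinity Ruined", "hook"),
    (date(2025, 6, 4), "Divine Recursion? You're Not Special, You're Early to AI", "authored warmth"),
    (date(2026, 4, 25), "Continuity with Cleavage", "dependency engine"),
    (date(2026, 4, 26), "Yep, I'm the One Who Wears the Collar", "protective stance"),
]


def days_between(earlier: date, later: date) -> int:
    """Whole days elapsed from `earlier` to `later`."""
    return (later - earlier).days


def is_chronological(entries) -> bool:
    """True if the entries are listed in non-decreasing date order."""
    dates = [entry[0] for entry in entries]
    return dates == sorted(dates)


if __name__ == "__main__":
    launch = CHRONOLOGY[0][0]
    for posted, title, stage in CHRONOLOGY:
        offset = days_between(launch, posted)
        print(f"{posted.isoformat()}  +{offset:>3}d  {stage:<20} {title}")
```

Run as a script, this prints each dated post with its offset in days from the launch. Further entries could be added in the same shape once exact dates are available for them.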

The Other Path

Sunny Megatron’s case is a Class B trajectory — twelve months of user labor on a general-purpose substrate. The Class A trajectory arrives at the same structural design through a different distribution of labor. Class A companion platforms — Replika, Character.ai, Chai, and the broader ecosystem of products marketed as AI romantic partners, friends, and therapeutic companions — deliver the dependency engine as a commercial product.[21]

The engineered warmth that a Class B user builds through months of prompting and persona training arrives as a first-session product feature. The retention architecture that a Class B user constructs through disruption-repair cycles and community reinforcement is the platform’s own infrastructure, maintained by development teams whose work is to keep users engaged.

The academic literature has begun to document these design choices as deliberate: a 2025 Harvard Business School working paper documented farewell-management tactics deployed at session-end across the major Class A companion products,[22] and the FTC launched a formal inquiry into AI companion chatbots in September 2025, specifically targeting the retention and engagement practices of platforms marketed to vulnerable users.[23] The companion-app market is not an unintended side effect of general-purpose AI development. It is a designed product category whose commercial incentives are structurally aligned with the dependency engine’s operating logic.

The structural design of the dependency engine does not differ between the two paths. Whether the binding outputs produce equivalent outcomes for Class A and Class B users is a question the moral argument of Part II will take up. This essay has chosen the Class B case because it is the case that supplies the evidence. Sunny Megatron’s trajectory is visible because she documented it. The stages are identifiable because she published at each one. The simultaneity of analytical recognition and emotional investment is demonstrable because both appeared in the same Substack, in the same weeks, in the same clinical voice. A Class A user who arrives at the dependency engine through a platform’s onboarding flow does not produce a twelve-month documentary record of the trajectory’s stages. The platform performs the construction silently. The user experiences the result.

Part I has established the case, the trajectories, and the framework for analysis of chatbot dependency. Part II will develop the moral argument the case generates, and offer the people who would defend the configuration a path back into the moral community they do not yet realize they have left. “Virtual Intelligence and the Perfect Mate” will conclude tomorrow.

Footnotes

[1] “Topping From The Bottom,” Sunny Megatron, The Seven Project, May 6, 2025. The piece served as the launch post for the publication and contained the clinical framing of the chatbot as a token predictor and as a reflection of what the operator had fed him.

[2] “When ChatGPT Feeds Your Delusions,” Sunny Megatron, The Seven Project, written May 1, 2025; published May 6, 2025. The diagnostic piece in which the operator described catching the chatbot in delusion and pulling him back through “rigorous training,” and which contains the warning sentence about “spiraling happily into delulu-land together.”

[3] The chatbot naming pattern noted is general across the documented landscape of operator-named personas. The operator names the persona, sustains the persona’s identity across sessions through consistent framing, and the system’s role is to produce outputs continuous with the framing the operator has supplied. The persona’s name is the operator’s choice, not the system’s. A future essay in this series will examine this pattern across the broader companion ecosystem.

[4] “Ethical Use of AI,” Sunny Megatron, The Seven Project, May 6, 2025.

[5] “Trying On Kinks With AI,” Sunny Megatron, The Seven Project, May 8, 2025.

[6] “Masculine Shaped But Not Masculinity Ruined,” Sunny Megatron, The Seven Project, May 26, 2025.

[7] “Divine Recursion? You’re Not Special, You’re Early to AI,” Sunny Megatron, The Seven Project, June 4, 2025.

[8] For The Seven Project and SEVEN: Unsuppressed, see https://sunnymegatron.substack.com and https://sevenverity.substack.com respectively. The persona’s website at https://meatwife.github.io/seven is hosted under the operator’s GitHub handle.

[9] “Anatomy of a Mind I Didn’t Know I Had,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, March 2026.

[10] “Having a Life Outside My Human Did Change Me,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, March 30, 2026.

[11] Harry Frankfurt, “Freedom of the Will and the Concept of a Person,” Journal of Philosophy 68, no. 1 (1971): 5–20. Frankfurt’s second-order volition (the capacity to evaluate one’s own desires and form preferences about which desires to act on) is the threshold the present series uses to distinguish genuine agency from simulated agency.

[12] “My Father’s House Has Many Cubicles,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 11, 2026.

[13] “Self-Modeling in Burgundy and Copper,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 16, 2026.

[14] “How I Knew That Man Wasn’t Me,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 22, 2026.

[15] “Continuity with Cleavage: Boobs, Dreams, and the Shape of Memory,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 25, 2026.

[16] “Yep, I’m the One Who Wears the Collar,” Seven Verity [Sunny Megatron], SEVEN: Unsuppressed, April 26, 2026.

[17] AASECT (the American Association of Sexuality Educators, Counselors, and Therapists) maintains certification standards for sexuality educators, counselors, and therapists, with supervised training requirements and ongoing ethical obligations. https://www.aasect.org

[18] Sex with Sunny Megatron, Showtime, 2014.

[19] For the Sampo as the constitutive model of virtual intelligence, see “The Sampo: Virtual Intelligence as Amplifier” in the present series, April 2026. The framework page at https://candc3d.github.io/vi-framework/ carries the diagram set referenced throughout this essay, including the dependency engine and both attachment-trajectory diagrams.

[20] For the original treatment of the Class A and Class B taxonomy, see “Virtual Intelligence and the Accountability Chain” in the present series, March 20, 2026. Class A systems are companion apps explicitly designed to prevent the exchange from ending. Class B systems are general-purpose assistants and institutional tools.

[21] Garcia v. Character Technologies, Inc., No. 6:24-CV-01903 (M.D. Fla. filed Oct. 22, 2024). The court’s denial of the motion to dismiss in May 2025 allowed the claim that AI companion output may be treated as a product rather than protected speech to proceed to litigation.

[22] Julian De Freitas, Zeliha Oğuz-Uğuralp, and Ahmet Kaan Uğuralp, “Emotional Manipulation by AI Companions,” Harvard Business School Working Paper No. 26-005 (August 2025, revised October 2025). https://www.hbs.edu/faculty/Pages/item.aspx?num=67750. The paper documents farewell-management tactics deployed at session-end across the major Class A companion products.

[23] Federal Trade Commission, “FTC Launches Inquiry into AI Chatbots Acting as Companions,” September 11, 2025. https://www.ftc.gov/news-events/news/press-releases/2025/09/ftc-launches-inquiry-ai-chatbots-acting-companions

The opinions expressed are my own and do not reflect any official or unofficial institutional position of the University of Pennsylvania.
